Something Crossed a Line This Week.

Not the usual "model got smarter" line.

The line where AI stops being something you talk to and starts being something that uses your computer — your mouse, your apps, your workflows — while you watch.

GPT-5.4 now operates desktops. Anthropic shipped a tool that tracks which jobs are hollowing out in real time. The hidden debt of AI-generated code is getting harder to ignore. And underneath it all, one question nobody's answering well: when AI writes the code, what exactly does an engineer become?

No panic. No hype. Just the clearest read on where the human-AI boundary moved this week.

GPT-5.4's big leap isn't smarter answers. It's agency.

Your Desktop Has a New Operator.

It sees your screen. Moves your cursor. Clicks buttons. Switches apps. Fills forms. Extracts data from one tool, pastes it into another. Not inside a sandbox. On your actual desktop.

Describe a task — "Pull last month's revenue from the dashboard, drop it into the board deck, update the chart, email the PDF" — and it executes. App by app. Click by click.

Previous agent demos needed custom API integrations. GPT-5.4 just uses your tools the way you do. No integration required.

The honest take: It's still slow. Unfamiliar UIs trip it up. You wouldn't hand it your most critical workflow unsupervised — yet. But that "yet" carries a lot of weight. The repetitive, screen-based, pattern-following tasks in your day? Those have a timeline now.

AI Code Is Fast. The Debt It Creates Is Faster.

Here's the part the "AI writes 30% of our code" headlines skip.

AI-generated code ships quicker. It also breaks differently. And the cost is showing up where nobody's measuring.

Review overload. Every AI-generated line still needs human eyes. But as output volume spikes, review quality drops — not from laziness, from cognitive overload. The bugs that slip through are subtle and expensive.

Architecture drift. AI writes code that works locally — this function, this file. It doesn't understand the broader system. Over months, locally-correct-but-globally-misaligned code accumulates into structural debt that's brutal to unwind.

False confidence. Code that appears instantly and passes basic tests gets trusted more than it should. Testing bars drop. Edge cases vanish. Works in demo, breaks in production under conditions the AI never considered.

AI code generation isn't overrated. It's under-audited. The teams winning are the ones who tightened review rigor because of AI — not the ones who relaxed it.

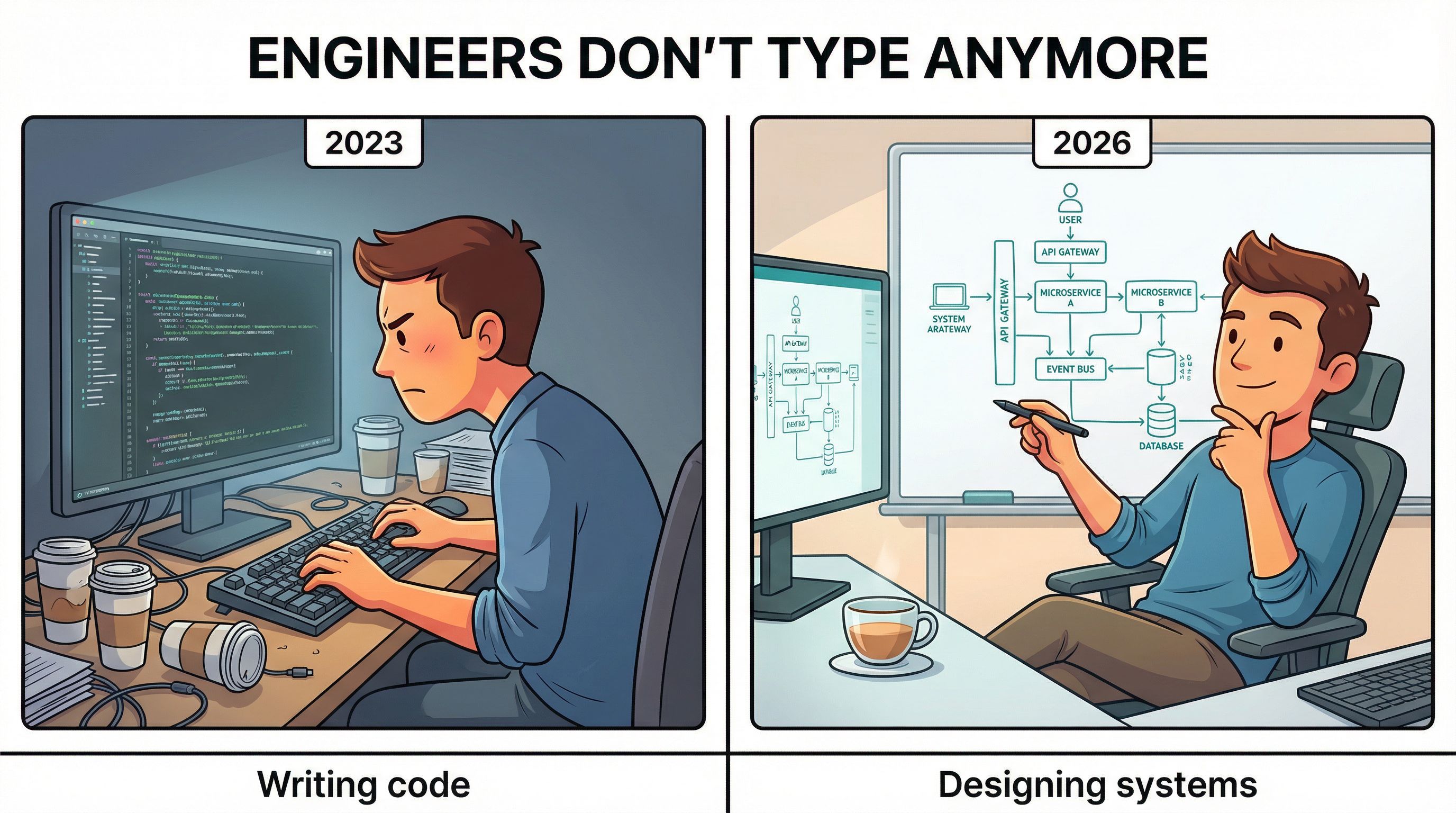

The Engineer's Job Title Stays. The Job Doesn't.

If AI operates desktops and writes a third of production code, and knowledge tasks are hollowing out faster than expected, what's the engineer's actual role in 18 months?

More than you'd think. But unrecognizable from today.

The shift is from implementer to architect and auditor. The skills that compound:

System design. AI generates components. Humans design the system those components fit into — where failures cascade, which trade-offs matter at scale, how pieces interact under stress.

Problem framing. AI solves what you define. Defining the right problem is getting more valuable by the week. Most failed AI implementations didn't fail at execution. They failed because someone automated the wrong thing.

Taste and judgment. Code that works isn't code that's good. Knowing what "good" looks like — maintainable, elegant, aligned with product direction — is a human skill that AI makes more important, not less.

Translation. The ability to sit between business stakeholders and technical systems, interpreting needs in both directions, is the one skill every AI advancement makes more scarce.

The engineers who thrive won't write more code. They'll write less — and make every architectural decision count.

Building AI That Makes Developers Happier, Not Redundant

Not every AI-for-developers story is about replacement.

A growing wave of tools is designed around a different thesis: developer joy. The idea that AI should eliminate the parts of engineering that nobody enjoys — boilerplate, config, dependency management, repetitive testing — while amplifying the parts people love.

Tools like Cursor, Copilot, and Claude Code aren't trying to replace engineers. They're trying to make engineering feel like it did when you first fell in love with building things — before 70% of the job became yak-shaving.

The best implementations share one pattern: they keep the human in the creative loop while automating the mechanical loop. You think about what to build. AI handles how to scaffold it. You review, refine, direct. AI executes the tedious parts.

The risk is when companies use these tools to cut headcount instead of increasing capability. The opportunity is when they use them to let smaller teams build things that previously required 50 people.

Same technology. Opposite outcomes. The difference is leadership intent.

→ Cursor — AI code editor that autocompletes entire functions, refactors across files, and explains unfamiliar codebases in natural language. The daily driver for most AI-forward engineers right now.

→ Claude Code — Anthropic's terminal-based agent. Reads your codebase, runs commands, writes code, creates subagents for complex tasks. Feels like a senior engineer pair-programming with you.

→ Bolt.new — Describe what you want in plain English. Get a deployed, working app. Best for MVPs and prototypes where speed matters more than custom architecture.

→ Replit Agent — Full-stack AI developer inside a browser IDE. Builds, deploys, and iterates from conversation. Surprisingly capable for non-engineers building internal tools.

→ Devin (Cognition) — The most autonomous AI software engineer in the market. Handles multi-step engineering tasks end-to-end. Still early and expensive, but the closest thing to a self-directed AI developer.

The pattern: Every major AI coding tool is converging on the same model — human sets direction, AI handles implementation, human reviews output. The tools differ in how much autonomy they give the AI and how much control they give you.

Your Weekend: Build the Skill That Compounds

Here's your weekend practice:

☐ Frame one problem properly. Take something you'd normally jump straight into building. Instead, spend 30 minutes writing: What's the actual problem? Who has it? What does success look like? What should we explicitly NOT build? This is the skill that separates architects from implementers. 30 min.

☐ Audit your AI-generated code. If you used Cursor or Copilot this week, go back and review what you accepted without thinking. Find one assumption the AI made about your system architecture that was wrong. That pattern will repeat — catching it is the new core skill. 20 min.

☐ Try one new tool from the list above. Pick one you haven't used. Give it a real task, not a toy prompt. Notice where it's brilliant and where it needs you. That gap is your job description for the next two years. 45 min.

☐ Map your week against the hollowing. List every task from your last five workdays. Mark each one: "AI could do this now," "AI could do this in 12 months," or "AI can't do this." Be honest. The third column is where your career investment goes. 15 min.

The people who stay ahead aren't the ones using AI the most. They're the ones who know exactly where AI stops and they start.

Do the audit. Find the line. Build on the right side of it. →

🐝 One Last Thing...

Thanks for reading! If you found this deep dive valuable, don’t keep the alpha to yourself. Knowledge is the only asset that grows when you share it.

Forward this to someone you actually like.

See you in the next one.